Docker Compose to Orchestrate Containers shows how to run two linked Docker containers using Docker Compose. Clustering Using Docker Swarm shows how to configure a Docker Swarm cluster.

This blog will show how to run a multi-container application created using Docker Compose in a Docker Swarm cluster.

Updated versions of Docker Compose and Docker Swarm were released alongside Docker 1.7.0.

Docker 1.7.0 CLI

Get the latest Docker CLI:

    curl https://get.docker.com/builds/Darwin/x86_64/docker-latest > /usr/local/bin/docker

and check the version as:

    docker -v
    Docker version 1.7.0, build 0baf609

Docker Machine 0.3.0

Get the latest Docker Machine as:

    curl -L https://github.com/docker/machine/releases/download/v0.3.0/docker-machine_darwin-amd64 > /usr/local/bin/docker-machine

and check the version as:

    docker-machine -v
    docker-machine version 0.3.0 (0a251fe)

Docker Compose 1.3.0

Get the latest Docker Compose as:

    curl -L https://github.com/docker/compose/releases/download/1.3.0/docker-compose-`uname -s`-`uname -m` > /usr/local/bin/docker-compose
    chmod +x /usr/local/bin/docker-compose

and verify the version as:

    docker-compose -v
    docker-compose version: 1.3.0
    CPython version: 2.7.9
    OpenSSL version: OpenSSL 1.0.1j 15 Oct 2014

Docker Swarm 0.3.0

Swarm is run as a Docker container and can be downloaded as:

    docker pull swarm

You can learn about Docker Swarm at docs.docker.com/swarm or Clustering using Docker Swarm.
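Before creating the cluster, it can help to confirm that all three client tools installed above are actually on the PATH. The following is only a minimal sketch: `require_tool` is a hypothetical helper name, not part of any Docker tooling.

```shell
#!/bin/sh
# require_tool is a hypothetical helper (not a Docker command).
# It reports whether the named command is on the PATH and
# returns non-zero when it is missing.
require_tool() {
  if command -v "$1" >/dev/null 2>&1; then
    echo "found: $1"
  else
    echo "missing: $1" >&2
    return 1
  fi
}

# Check the three clients installed above; keep going even if one is absent.
for tool in docker docker-machine docker-compose; do
  require_tool "$tool" || true
done
```

Any tool reported as missing needs its download step repeated before continuing.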

Create Docker Swarm Cluster

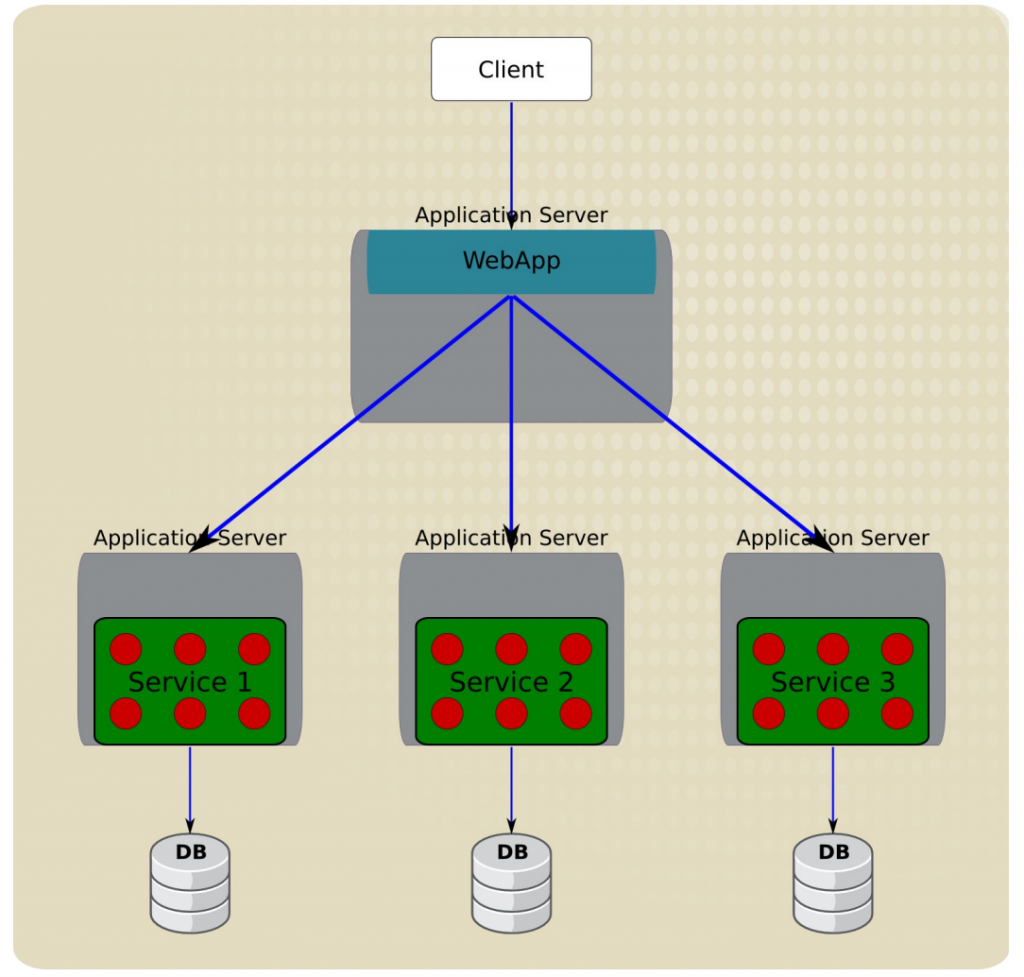

The key components of Docker Swarm are shown below:

and explained in Clustering Using Docker Swarm.

- The easiest way of getting started with Swarm is by using the official Docker image:

      docker run swarm create

  This command returns a discovery token, referred to as `<TOKEN>` in this document, which is the unique cluster id. It will be used when creating the master and nodes later. This cluster id is returned by the hosted discovery service on Docker Hub. It shows the output as:

      docker run swarm create
      Unable to find image 'swarm:latest' locally
      latest: Pulling from swarm
      55b38848634f: Pull complete
      fd7bc7d11a30: Pull complete
      db039e91413f: Pull complete
      1e5a49ab6458: Pull complete
      5d9ce3cdadc7: Pull complete
      1f26e949f933: Pull complete
      e08948058bed: Already exists
      swarm:latest: The image you are pulling has been verified. Important: image verification is a tech preview feature and should not be relied on to provide security.
      Digest: sha256:0e417fe3f7f2c7683599b94852e4308d1f426c82917223fccf4c1c4a4eddb8ef
      Status: Downloaded newer image for swarm:latest
      1d528bf0568099a452fef5c029f39b85

  The last line is the `<TOKEN>`.

  Make sure to note this cluster id now as there is no means to list it later. This should be fixed with #661.

- Swarm is fully integrated with Docker Machine, which makes Machine the easiest way to get started. Let’s create a Swarm master next:

      docker-machine create -d virtualbox --swarm --swarm-master --swarm-discovery token://<TOKEN> swarm-master

  Replace `<TOKEN>` with the cluster id obtained in the previous step. `--swarm` configures the machine with Swarm, and `--swarm-master` configures the created machine to be the Swarm master. Swarm master creation talks to the hosted service on Docker Hub and informs it that a master has been created in the cluster.

- Connect to this newly created master and find some more information about it:

      eval "$(docker-machine env swarm-master)"
      docker info

  This will show the output as:

      > docker info
      Containers: 2
      Images: 7
      Storage Driver: aufs
       Root Dir: /mnt/sda1/var/lib/docker/aufs
       Backing Filesystem: extfs
       Dirs: 11
       Dirperm1 Supported: true
      Execution Driver: native-0.2
      Logging Driver: json-file
      Kernel Version: 4.0.5-boot2docker
      Operating System: Boot2Docker 1.7.0 (TCL 6.3); master : 7960f90 - Thu Jun 18 18:31:45 UTC 2015
      CPUs: 1
      Total Memory: 996.2 MiB
      Name: swarm-master
      ID: DLFR:OQ3E:B5P6:HFFD:VKLI:IOLU:URNG:HML5:UHJF:6JCL:ITFH:DS6J
      Debug mode (server): true
      File Descriptors: 22
      Goroutines: 36
      System Time: 2015-07-11T00:16:34.29965306Z
      EventsListeners: 1
      Init SHA1:
      Init Path: /usr/local/bin/docker
      Docker Root Dir: /mnt/sda1/var/lib/docker
      Username: arungupta
      Registry: https://index.docker.io/v1/
      Labels:
       provider=virtualbox

- Create a Swarm node:

      docker-machine create -d virtualbox --swarm --swarm-discovery token://<TOKEN> swarm-node-01

  Replace `<TOKEN>` with the cluster id obtained in an earlier step. Node creation talks to the hosted service at Docker Hub and joins the previously created cluster. The cluster to join is identified by `--swarm-discovery token://...`, which carries the cluster id obtained earlier.

- To make it a real cluster, let’s create a second node:

      docker-machine create -d virtualbox --swarm --swarm-discovery token://<TOKEN> swarm-node-02

  Replace `<TOKEN>` with the cluster id obtained in the earlier step.

- List all the nodes created so far:

      docker-machine ls

  This shows output similar to the one below:

      docker-machine ls
      NAME            ACTIVE   DRIVER       STATE     URL                         SWARM
      lab                      virtualbox   Running   tcp://192.168.99.101:2376
      summit2015               virtualbox   Running   tcp://192.168.99.100:2376
      swarm-master    *        virtualbox   Running   tcp://192.168.99.102:2376   swarm-master (master)
      swarm-node-01            virtualbox   Running   tcp://192.168.99.103:2376   swarm-master
      swarm-node-02            virtualbox   Running   tcp://192.168.99.104:2376   swarm-master

  The machines that are part of the cluster have the cluster’s name in the SWARM column; the column is blank otherwise. For example, “lab” and “summit2015” are standalone machines whereas all other machines are part of the “swarm-master” cluster. The Swarm master is also identified by (master) in the SWARM column.

- Connect to the Swarm cluster and find some information about it:

      eval "$(docker-machine env --swarm swarm-master)"
      docker info

  This shows the output as:

      > docker info
      Containers: 4
      Images: 3
      Role: primary
      Strategy: spread
      Filters: affinity, health, constraint, port, dependency
      Nodes: 3
       swarm-master: 192.168.99.102:2376
        └ Containers: 2
        └ Reserved CPUs: 0 / 1
        └ Reserved Memory: 0 B / 1.022 GiB
        └ Labels: executiondriver=native-0.2, kernelversion=4.0.5-boot2docker, operatingsystem=Boot2Docker 1.7.0 (TCL 6.3); master : 7960f90 - Thu Jun 18 18:31:45 UTC 2015, provider=virtualbox, storagedriver=aufs
       swarm-node-01: 192.168.99.103:2376
        └ Containers: 1
        └ Reserved CPUs: 0 / 1
        └ Reserved Memory: 0 B / 1.022 GiB
        └ Labels: executiondriver=native-0.2, kernelversion=4.0.5-boot2docker, operatingsystem=Boot2Docker 1.7.0 (TCL 6.3); master : 7960f90 - Thu Jun 18 18:31:45 UTC 2015, provider=virtualbox, storagedriver=aufs
       swarm-node-02: 192.168.99.104:2376
        └ Containers: 1
        └ Reserved CPUs: 0 / 1
        └ Reserved Memory: 0 B / 1.022 GiB
        └ Labels: executiondriver=native-0.2, kernelversion=4.0.5-boot2docker, operatingsystem=Boot2Docker 1.7.0 (TCL 6.3); master : 7960f90 - Thu Jun 18 18:31:45 UTC 2015, provider=virtualbox, storagedriver=aufs
      CPUs: 3
      Total Memory: 3.065 GiB

  There are 3 nodes: one Swarm master and 2 Swarm nodes. A total of 4 containers are running in this cluster: one Swarm agent on the master and on each node, plus an additional swarm-agent-master running on the master.

- List nodes in the cluster with the following command:

      docker run swarm list token://<TOKEN>

  This shows the output as:

      > docker run swarm list token://1d528bf0568099a452fef5c029f39b85
      192.168.99.103:2376
      192.168.99.104:2376
      192.168.99.102:2376
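The cluster-creation steps above can be sketched as a small script. This is only an illustration under stated assumptions: `extract_token` and `machine_cmd` are hypothetical helper names, the token is assumed to be the last non-empty line of the `docker run swarm create` output (as in the sample shown earlier), and the script only prints the `docker-machine` commands instead of running them.

```shell
#!/bin/sh
# Hypothetical helpers sketching the cluster setup described in this post.

# extract_token: print the last non-empty line read from stdin.
# Assumption: that line is the cluster id, as in the sample output above.
extract_token() {
  awk 'NF { last = $0 } END { print last }'
}

# machine_cmd: print (do not run) the docker-machine command for a machine.
#   $1 = "master" or "node", $2 = machine name, $3 = discovery token
machine_cmd() {
  master_flag=""
  if [ "$1" = "master" ]; then
    master_flag=" --swarm-master"
  fi
  echo "docker-machine create -d virtualbox --swarm${master_flag} --swarm-discovery token://$3 $2"
}

# Demo using the tail of the sample output from this post.
# Nothing is executed against Docker here; commands are only printed.
TOKEN=$(printf '%s\n' \
  'Status: Downloaded newer image for swarm:latest' \
  '1d528bf0568099a452fef5c029f39b85' | extract_token)

machine_cmd master swarm-master "$TOKEN"
for n in 01 02; do
  machine_cmd node "swarm-node-$n" "$TOKEN"
done
```

For a real run, the `printf` sample would be replaced by `docker run swarm create`, and the printed commands could be reviewed before being executed. Saving the token in a variable also avoids losing the cluster id, which cannot be listed later.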

Deploy Java EE Application to Docker Swarm Cluster using Docker Compose

Docker Compose to Orchestrate Containers explains how multi-container applications can be easily started using Docker Compose.

- Use the `docker-compose.yml` file explained in that blog to start the containers as:

      docker-compose up -d
      Creating wildflymysqljavaee7_mysqldb_1...
      Creating wildflymysqljavaee7_mywildfly_1...

  The `docker-compose.yml` file looks like:

      mysqldb:
        image: mysql:latest
        environment:
          MYSQL_DATABASE: sample
          MYSQL_USER: mysql
          MYSQL_PASSWORD: mysql
          MYSQL_ROOT_PASSWORD: supersecret
      mywildfly:
        image: arungupta/wildfly-mysql-javaee7
        links:
          - mysqldb:db
        ports:
          - 8080:8080

- Check the containers running in the cluster as:

      eval "$(docker-machine env --swarm swarm-master)"
      docker info

  to see the output as:

      docker info
      Containers: 7
      Images: 5
      Role: primary
      Strategy: spread
      Filters: affinity, health, constraint, port, dependency
      Nodes: 3
       swarm-master: 192.168.99.102:2376
        └ Containers: 2
        └ Reserved CPUs: 0 / 1
        └ Reserved Memory: 0 B / 1.022 GiB
        └ Labels: executiondriver=native-0.2, kernelversion=4.0.5-boot2docker, operatingsystem=Boot2Docker 1.7.0 (TCL 6.3); master : 7960f90 - Thu Jun 18 18:31:45 UTC 2015, provider=virtualbox, storagedriver=aufs
       swarm-node-01: 192.168.99.103:2376
        └ Containers: 2
        └ Reserved CPUs: 0 / 1
        └ Reserved Memory: 0 B / 1.022 GiB
        └ Labels: executiondriver=native-0.2, kernelversion=4.0.5-boot2docker, operatingsystem=Boot2Docker 1.7.0 (TCL 6.3); master : 7960f90 - Thu Jun 18 18:31:45 UTC 2015, provider=virtualbox, storagedriver=aufs
       swarm-node-02: 192.168.99.104:2376
        └ Containers: 3
        └ Reserved CPUs: 0 / 1
        └ Reserved Memory: 0 B / 1.022 GiB
        └ Labels: executiondriver=native-0.2, kernelversion=4.0.5-boot2docker, operatingsystem=Boot2Docker 1.7.0 (TCL 6.3); master : 7960f90 - Thu Jun 18 18:31:45 UTC 2015, provider=virtualbox, storagedriver=aufs
      CPUs: 3
      Total Memory: 3.065 GiB

- “swarm-node-02” is running three containers, so let’s look at the list of containers running there:

      eval "$(docker-machine env swarm-node-02)"

  and see the list of running containers as:

      docker ps -a
      CONTAINER ID   IMAGE                             COMMAND                CREATED          STATUS          PORTS                    NAMES
      b1e7d9bd2c09   arungupta/wildfly-mysql-javaee7   "/opt/jboss/wildfly/   38 seconds ago   Up 37 seconds   0.0.0.0:8080->8080/tcp   wildflymysqljavaee7_mywildfly_1
      ac9c967e4b1d   mysql:latest                      "/entrypoint.sh mysq   38 seconds ago   Up 38 seconds   3306/tcp                 wildflymysqljavaee7_mysqldb_1
      45b015bc79f4   swarm:latest                      "/swarm join --addr    20 minutes ago   Up 20 minutes   2375/tcp                 swarm-agent

- The application can then be accessed using:

      curl http://$(docker-machine ip swarm-node-02):8080/employees/resources/employees

  and shows the output as:

      <?xml version="1.0" encoding="UTF-8" standalone="yes"?><collection><employee><id>1</id><name>Penny</name></employee><employee><id>2</id><name>Sheldon</name></employee><employee><id>3</id><name>Amy</name></employee><employee><id>4</id><name>Leonard</name></employee><employee><id>5</id><name>Bernadette</name></employee><employee><id>6</id><name>Raj</name></employee><employee><id>7</id><name>Howard</name></employee><employee><id>8</id><name>Priya</name></employee></collection>
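A quick sanity check on the deployed application is to count the `<employee>` elements in the response. The sketch below assumes `grep -o` is available (it is in GNU and BSD grep, though not required by POSIX); `count_employees` is a hypothetical helper name, and the demo input is a shortened sample rather than the live response.

```shell
#!/bin/sh
# count_employees: hypothetical helper that counts <employee> opening tags
# in XML read from stdin. tr strips the padding some wc versions add.
count_employees() {
  grep -o '<employee>' | wc -l | tr -d ' '
}

# Demo on a shortened sample with two employees; prints 2.
printf '%s\n' '<collection><employee><id>1</id><name>Penny</name></employee><employee><id>2</id><name>Sheldon</name></employee></collection>' \
  | count_employees
```

Against the running cluster, the same helper could be fed from `curl -s http://$(docker-machine ip swarm-node-02):8080/employees/resources/employees`, where the response shown above would yield 8.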

Latest instructions for this setup are always available at: github.com/javaee-samples/docker-java/blob/master/chapters/docker-swarm.adoc.

Enjoy!
