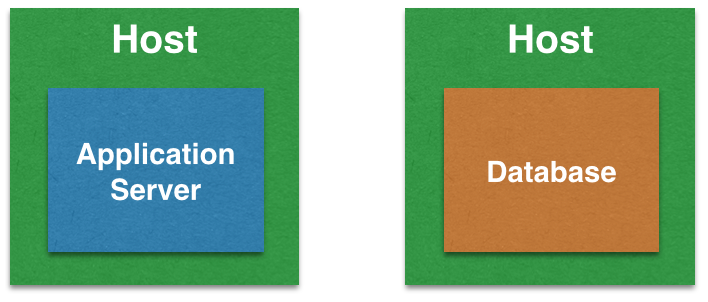

This blog will show how a simple Java application can talk to a database using service discovery in Kubernetes.

Service Discovery with Java and Database application in DC/OS explains why service discovery is an important aspect of a multi-container application, and how it can be done in DC/OS.

Let’s see how this can be accomplished in Kubernetes with a single instance of an application server and a database server. This blog will use WildFly as the application server and Couchbase as the database.

This blog will use the following main steps:

- Start Kubernetes one-node cluster

- Kubernetes application definition

- Deploy the application

- Access the application

Start Kubernetes Cluster

Minikube is the easiest way to start a one-node Kubernetes cluster in a VM on your laptop. The binary needs to be downloaded first and then installed.

Complete installation instructions are available at github.com/kubernetes/minikube.

The latest release can be installed on OSX as:

    curl -Lo minikube https://storage.googleapis.com/minikube/releases/v0.17.1/minikube-darwin-amd64 \
      && chmod +x minikube

Minikube also requires kubectl to be installed. Installing and Setting up kubectl provides detailed instructions on how to set up kubectl. On OSX, it can be installed as:

    curl -LO https://storage.googleapis.com/kubernetes-release/release/$(curl -s https://storage.googleapis.com/kubernetes-release/release/stable.txt)/bin/darwin/amd64/kubectl \
      && chmod +x ./kubectl

Now, start the cluster as:

    minikube start
    Starting local Kubernetes cluster...
    Starting VM...
    Downloading Minikube ISO
     88.71 MB / 88.71 MB [==============================================] 100.00% 0s
    SSH-ing files into VM...
    Setting up certs...
    Starting cluster components...
    Connecting to cluster...
    Setting up kubeconfig...
    Kubectl is now configured to use the cluster.

The kubectl version command shows more details about the kubectl client and minikube server version:

    kubectl version
    Client Version: version.Info{Major:"1", Minor:"5", GitVersion:"v1.5.4", GitCommit:"7243c69eb523aa4377bce883e7c0dd76b84709a1", GitTreeState:"clean", BuildDate:"2017-03-07T23:53:09Z", GoVersion:"go1.7.4", Compiler:"gc", Platform:"darwin/amd64"}
    Server Version: version.Info{Major:"1", Minor:"5", GitVersion:"v1.5.3", GitCommit:"029c3a408176b55c30846f0faedf56aae5992e9b", GitTreeState:"clean", BuildDate:"1970-01-01T00:00:00Z", GoVersion:"go1.7.3", Compiler:"gc", Platform:"linux/amd64"}

More details about the cluster can be obtained using the kubectl cluster-info command:

    Kubernetes master is running at https://192.168.99.100:8443
    KubeDNS is running at https://192.168.99.100:8443/api/v1/proxy/namespaces/kube-system/services/kube-dns
    kubernetes-dashboard is running at https://192.168.99.100:8443/api/v1/proxy/namespaces/kube-system/services/kubernetes-dashboard

    To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

Kubernetes Application Definition

The application definition is available at github.com/arun-gupta/kubernetes-java-sample/blob/master/service-discovery.yml. It consists of:

- A Couchbase service

- A Couchbase replica set with a single pod

- A WildFly replica set with a single pod

    apiVersion: v1
    kind: Service
    metadata:
      name: couchbase-service
    spec:
      selector:
        app: couchbase-rs-pod
      ports:
        - name: admin
          port: 8091
        - name: views
          port: 8092
        - name: query
          port: 8093
        - name: memcached
          port: 11210
    ---
    apiVersion: extensions/v1beta1
    kind: ReplicaSet
    metadata:
      name: couchbase-rs
    spec:
      replicas: 1
      template:
        metadata:
          labels:
            app: couchbase-rs-pod
        spec:
          containers:
          - name: couchbase
            image: arungupta/couchbase:travel
            ports:
            - containerPort: 8091
            - containerPort: 8092
            - containerPort: 8093
            - containerPort: 11210
    ---
    apiVersion: extensions/v1beta1
    kind: ReplicaSet
    metadata:
      name: wildfly-rs
      labels:
        name: wildfly
    spec:
      replicas: 1
      template:
        metadata:
          labels:
            name: wildfly
        spec:
          containers:
          - name: wildfly-rs-pod
            image: arungupta/wildfly-couchbase-javaee:travel
            env:
            - name: COUCHBASE_URI
              value: couchbase-service
            ports:
            - containerPort: 8080

The COUCHBASE_URI environment variable is set to the name of the Couchbase service. This allows the application deployed in WildFly to dynamically discover the service and communicate with the database.
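As a rough illustration of how an application might consume this variable, the sketch below reads COUCHBASE_URI from the environment and falls back to a default host when it is not set. The class name `CouchbaseHost` and the `localhost` fallback are assumptions made for this example; this is not the code from the sample repository.

```java
import java.util.Map;

// Hypothetical helper, not taken from the sample application.
final class CouchbaseHost {
    // Name of the variable set in the WildFly replica set definition.
    static final String ENV_NAME = "COUCHBASE_URI";
    // Assumed fallback for running outside Kubernetes.
    static final String FALLBACK_HOST = "localhost";

    // Returns the configured host, or the fallback when the variable is missing or blank.
    static String resolve(Map<String, String> env) {
        String value = env.get(ENV_NAME);
        if (value == null || value.trim().isEmpty()) {
            return FALLBACK_HOST;
        }
        return value.trim();
    }

    public static void main(String[] args) {
        // Inside the WildFly pod this would print "couchbase-service".
        System.out.println("Couchbase host: " + resolve(System.getenv()));
    }
}
```

Passing the environment in as a map keeps the lookup easy to exercise without changing the process environment.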

The arungupta/couchbase:travel Docker image is built from github.com/arun-gupta/couchbase-javaee/blob/master/couchbase/Dockerfile.

The arungupta/wildfly-couchbase-javaee:travel Docker image is built from github.com/arun-gupta/couchbase-javaee/blob/master/Dockerfile.

The Java EE application waits for database initialization to complete before it starts querying the database. This can be seen at github.com/arun-gupta/couchbase-javaee/blob/master/src/main/java/org/couchbase/sample/javaee/Database.java#L25.
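The waiting behavior can be pictured as a simple polling loop. The following is a minimal sketch under stated assumptions, not the code in Database.java: the class `WaitForDatabase`, the method `waitUntilReady`, and the stand-in readiness check are all hypothetical, and a real check would ask Couchbase whether the bucket exists.

```java
import java.util.function.BooleanSupplier;

// Hypothetical polling helper, not taken from the sample application.
final class WaitForDatabase {
    // Calls the check up to maxAttempts times, sleeping between failed attempts.
    // Returns true as soon as the check succeeds, false if it never does.
    static boolean waitUntilReady(BooleanSupplier ready, int maxAttempts, long sleepMillis) {
        for (int attempt = 1; attempt <= maxAttempts; attempt++) {
            if (ready.getAsBoolean()) {
                return true;
            }
            if (attempt < maxAttempts) {
                try {
                    Thread.sleep(sleepMillis);
                } catch (InterruptedException e) {
                    // Preserve the interrupt flag and stop waiting.
                    Thread.currentThread().interrupt();
                    return false;
                }
            }
        }
        return false;
    }

    public static void main(String[] args) {
        // Stand-in check that succeeds on the third call.
        int[] calls = {0};
        boolean found = waitUntilReady(() -> ++calls[0] >= 3, 5, 100L);
        System.out.println(found ? "Bucket found!" : "Gave up waiting for the bucket");
    }
}
```

Bounding the number of attempts avoids blocking deployment forever if the database never comes up.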

Deploy Application

This application can be deployed as:

    kubectl create -f ~/workspaces/kubernetes-java-sample/service-discovery.yml

The list of services and replica sets can be shown using the command kubectl get svc,rs:

    NAME                    CLUSTER-IP   EXTERNAL-IP   PORT(S)                                AGE
    svc/couchbase-service   10.0.0.97    <none>        8091/TCP,8092/TCP,8093/TCP,11210/TCP   27m
    svc/kubernetes          10.0.0.1     <none>        443/TCP                                1h
    svc/wildfly-rs          10.0.0.252   <none>        8080/TCP                               21m

    NAME              DESIRED   CURRENT   READY     AGE
    rs/couchbase-rs   1         1         1         27m
    rs/wildfly-rs     1         1         1         27m

Logs for the single replica of Couchbase can be obtained using the command kubectl logs rs/couchbase-rs:

    ++ set -m
    ++ sleep 25
    ++ /entrypoint.sh couchbase-server
    Starting Couchbase Server -- Web UI available at http://<ip>:8091 and logs available in /opt/couchbase/var/lib/couchbase/logs
    ++ curl -v -X POST http://127.0.0.1:8091/pools/default -d memoryQuota=300 -d indexMemoryQuota=300
    . . .
    {"storageMode":"memory_optimized","indexerThreads":0,"memorySnapshotInterval":200,"stableSnapshotInterval":5000,"maxRollbackPoints":5,"logLevel":"info"}[]Type:
    ++ echo 'Type: '
    ++ '[' '' = WORKER ']'
    ++ fg 1
    /entrypoint.sh couchbase-server

Logs for the WildFly replica set can be seen using the command kubectl logs rs/wildfly-rs:

    =========================================================================

      JBoss Bootstrap Environment

      JBOSS_HOME: /opt/jboss/wildfly

    . . .
    06:32:08,537 INFO [com.couchbase.client.core.node.Node] (cb-io-1-1) Connected to Node couchbase-service
    06:32:09,262 INFO [com.couchbase.client.core.config.ConfigurationProvider] (cb-computations-3) Opened bucket travel-sample
    06:32:09,366 INFO [stdout] (ServerService Thread Pool -- 65) Sleeping for 3 secs ...
    06:32:12,369 INFO [stdout] (ServerService Thread Pool -- 65) Bucket found!
    06:32:14,194 INFO [org.jboss.resteasy.resteasy_jaxrs.i18n] (ServerService Thread Pool -- 65) RESTEASY002225: Deploying javax.ws.rs.core.Application: class org.couchbase.sample.javaee.MyApplication
    06:32:14,195 INFO [org.jboss.resteasy.resteasy_jaxrs.i18n] (ServerService Thread Pool -- 65) RESTEASY002200: Adding class resource org.couchbase.sample.javaee.AirlineResource from Application class org.couchbase.sample.javaee.MyApplication
    06:32:14,310 INFO [org.wildfly.extension.undertow] (ServerService Thread Pool -- 65) WFLYUT0021: Registered web context: /airlines
    06:32:14,376 INFO [org.jboss.as.server] (ServerService Thread Pool -- 34) WFLYSRV0010: Deployed "airlines.war" (runtime-name : "airlines.war")
    06:32:14,704 INFO [org.jboss.as] (Controller Boot Thread) WFLYSRV0060: Http management interface listening on http://127.0.0.1:9990/management
    06:32:14,704 INFO [org.jboss.as] (Controller Boot Thread) WFLYSRV0051: Admin console listening on http://127.0.0.1:9990
    06:32:14,705 INFO [org.jboss.as] (Controller Boot Thread) WFLYSRV0025: WildFly Full 10.1.0.Final (WildFly Core 2.2.0.Final) started in 29470ms - Started 443 of 691 services (404 services are lazy, passive or on-demand)

Access Application

The kubectl proxy command starts a proxy to the Kubernetes API server. Let’s start a Kubernetes proxy to access our application:

    kubectl proxy
    Starting to serve on 127.0.0.1:8001

Expose the WildFly replica set as a service using:

    kubectl expose --name=wildfly-service rs/wildfly-rs

The list of services can be seen again using the kubectl get svc command:

    kubectl get svc
    NAME                CLUSTER-IP   EXTERNAL-IP   PORT(S)                                AGE
    couchbase-service   10.0.0.97    <none>        8091/TCP,8092/TCP,8093/TCP,11210/TCP   41m
    kubernetes          10.0.0.1     <none>        443/TCP                                1h
    wildfly-service     10.0.0.169   <none>        8080/TCP                               5s

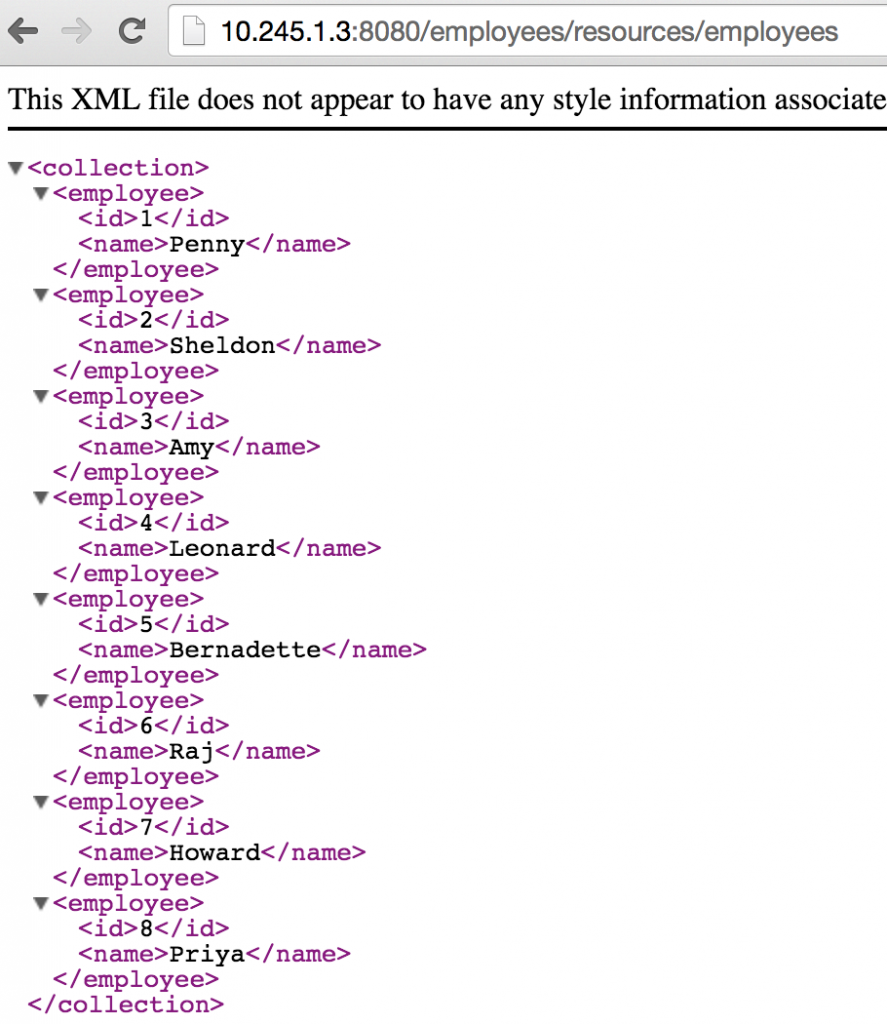

Now, the application is accessible at:

    curl http://localhost:8001/api/v1/proxy/namespaces/default/services/wildfly-service/airlines/resources/airline
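The URL above combines the local proxy address, the namespace, the service name, and the application path. A small sketch of how these pieces fit together follows; the class `ProxyUrl` and its method are hypothetical names for this example, and the `/api/v1/proxy/...` layout is simply the one used in this post, so it may differ on other Kubernetes versions.

```java
// Hypothetical helper that assembles the proxy URL used in this post.
final class ProxyUrl {
    // Joins the proxy base, namespace, service name, and application path.
    static String forService(String proxyBase, String namespace, String service, String path) {
        // Normalize slashes so the pieces join with exactly one separator.
        String base = proxyBase.endsWith("/")
                ? proxyBase.substring(0, proxyBase.length() - 1)
                : proxyBase;
        String suffix = path.startsWith("/") ? path.substring(1) : path;
        return base + "/api/v1/proxy/namespaces/" + namespace
                + "/services/" + service + "/" + suffix;
    }

    public static void main(String[] args) {
        // Values taken from the commands shown in this post.
        System.out.println(forService(
                "http://localhost:8001", "default", "wildfly-service",
                "airlines/resources/airline"));
    }
}
```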

A formatted output looks like:

    [
      {
        "travel-sample": {
          "country": "United States",
          "iata": "Q5",
          "callsign": "MILE-AIR",
          "name": "40-Mile Air",
          "icao": "MLA",
          "id": 10,
          "type": "airline"
        }
      },
      {
        "travel-sample": {
          "country": "United States",
          "iata": "TQ",
    . . .
          "name": "Airlinair",
          "icao": "RLA",
          "id": 1203,
          "type": "airline"
        }
      }
    ]

New pods may now be added to the Couchbase service by scaling the replica set, and existing pods may be terminated or rescheduled. Through all of this, the Java EE application continues to reach the database service by its logical name.
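The logical name works because the cluster DNS add-on (KubeDNS in the cluster-info output earlier) gives each service a DNS entry. The sketch below builds the fully qualified form of that name and tries to resolve it. It assumes the `default` namespace and the common `cluster.local` cluster domain, both of which may differ in other clusters, and `ServiceDns` is a hypothetical class name.

```java
import java.net.InetAddress;
import java.net.UnknownHostException;

// Hypothetical helper illustrating Kubernetes service DNS names.
final class ServiceDns {
    // Builds <service>.<namespace>.svc.<clusterDomain>.
    static String qualifiedName(String service, String namespace, String clusterDomain) {
        return service + "." + namespace + ".svc." + clusterDomain;
    }

    public static void main(String[] args) {
        // Namespace and cluster domain are assumptions for this example.
        String name = qualifiedName("couchbase-service", "default", "cluster.local");
        try {
            InetAddress address = InetAddress.getByName(name);
            System.out.println(name + " -> " + address.getHostAddress());
        } catch (UnknownHostException e) {
            // Expected when this runs outside a pod in the cluster.
            System.out.println(name + " did not resolve from this machine");
        }
    }
}
```

Pods in the same namespace can use the short name `couchbase-service` directly, which is what the COUCHBASE_URI value in this post relies on.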

This blog showed how a simple Java application can talk to a database using service discovery in Kubernetes.

For further information check out:

- Kubernetes Docs

- Couchbase on Containers

- Couchbase Developer Portal

- Ask questions on Couchbase Forums or Stack Overflow

- Download Couchbase
