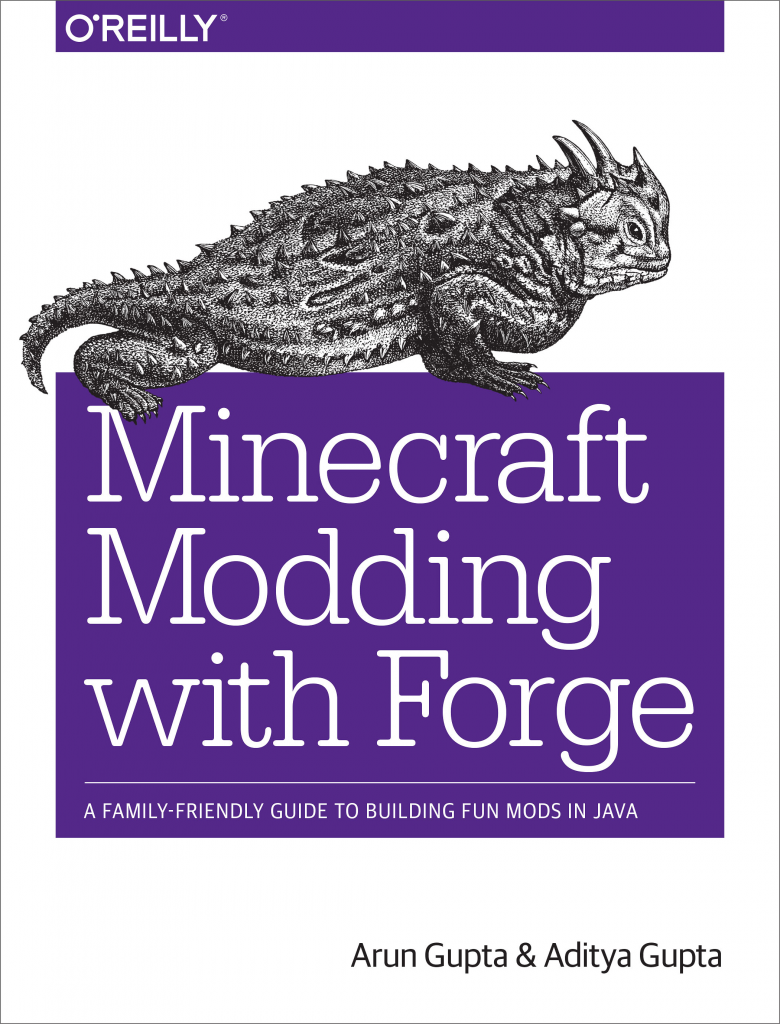

Would you like to learn Minecraft Modding in a family-friendly way?

Don’t have any previous programming experience?

Never programmed in Java?

This new O’Reilly book on Minecraft Modding with Forge is targeted at parents and kids who would like to learn how to create new Minecraft mods. It can be read by parents or kids independently, and is more fun when they read it together. No prior programming experience is required however some familiarity with software installation would be very helpful.

Release Date: April 2015

Language: English

Pages: 194

Print ISBN:978-1-4919-1889-0| ISBN 10:1-4919-1889-6

Ebook ISBN:978-1-4919-1883-8| ISBN 10:1-4919-1883-7

Minecraft is commonly associated with “addiction”. This book hopes to leverage the passionate kids and teach them how to do Minecraft Modding, and in the process teach some fundamental Java concepts. They also pick up basic Eclipse skills as well.

It uses Minecraft Forge and shows how to create over two dozen mods. Here is the complete Table of Content:

| Chapter 1 | Introduction |

| Chapter 2 | Block Break Message |

| Chapter 3 | Fun with Explosions |

| Chapter 4 | Entities |

| Chapter 5 | Movement |

| Chapter 6 | New Commands |

| Chapter 7 | New Block |

| Chapter 8 | New Item |

| Chapter 9 | Recipes and Textures |

| Chapter 10 | Sharing Mods |

| Appendix A | What is Minecraft? |

| Appendix B | Eclipse Shortcuts and Correct Imports |

| Appendix C | Downloading the Source Code from GitHub |

| Appendix D | Devoxx4Kids |

Each chapter also provide several additional ideas on what readers can try based upon what they learned.

It has been an extremely joyful and rewarding experience to co-author the book with my 12-year old son. Many thanks to O’Reilly for providing this opportunity of a lifetime experience to us.

Here is the effort distribution by different collaborators on the book:

|

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

|

Total number of files: 177

Total number of lines: 4,843

Total number of commits: 1,266

+------------------------+-------+---------+-------+--------------------+

| name | loc | commits | files | distribution |

+------------------------+-------+---------+-------+--------------------+

| Aditya Gupta | 1,816 | 185 | 17 | 37.5 / 14.6 / 9.6 |

| kxxxxxx@oreilly.com | 1,269 | 697 | 27 | 26.2 / 55.1 / 15.3 |

| arun-gupta | 1,084 | 159 | 21 | 22.4 / 12.6 / 11.9 |

| Chris Pappas | 379 | 16 | 25 | 7.8 / 1.3 / 14.1 |

| xxxxxxxxxxxx@gmail.com | 191 | 164 | 14 | 3.9 / 13.0 / 7.9 |

| xxxxxxx@oreilly.com | 67 | 12 | 3 | 1.4 / 0.9 / 1.7 |

| xxxxxx@oreilly.com | 26 | 15 | 2 | 0.5 / 1.2 / 1.1 |

| xxxxxxxxxx@oreilly.com | 6 | 1 | 1 | 0.1 / 0.1 / 0.6 |

| Arun Gupta | 5 | 2 | 2 | 0.1 / 0.2 / 1.1 |

| Kristen Brown | 0 | 11 | 0 | 0.0 / 0.9 / 0.0 |

| xxxxxxxxx@oreilly.com | 0 | 2 | 0 | 0.0 / 0.2 / 0.0 |

| vagrant | 0 | 2 | 0 | 0.0 / 0.2 / 0.0 |

+------------------------+-------+---------+-------+--------------------+

|

The book is available in print and ebook and can be purchased from shop.oreilly.com/product/0636920036562.do.

Three reviews so far are all five star and so that is encouraging:

Its also marked as #1 hot new release at Amazon in Game Programming:

Scan the QR code to get the URL on your favorite device and give the first Java programming lesson to your kid – the Minecraft Way. They are going to thank you for that!

Happy modding and looking forward to your reviews.

Hail Spigot for reviving Bukkit, and updating to 1.8.3!

Hail Spigot for reviving Bukkit, and updating to 1.8.3!

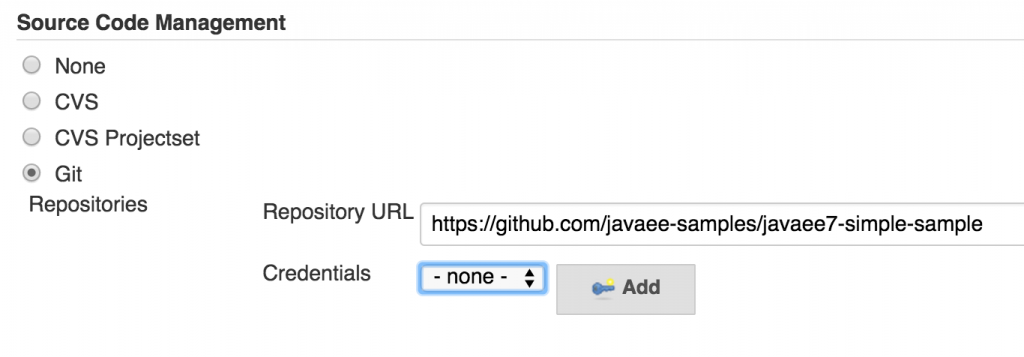

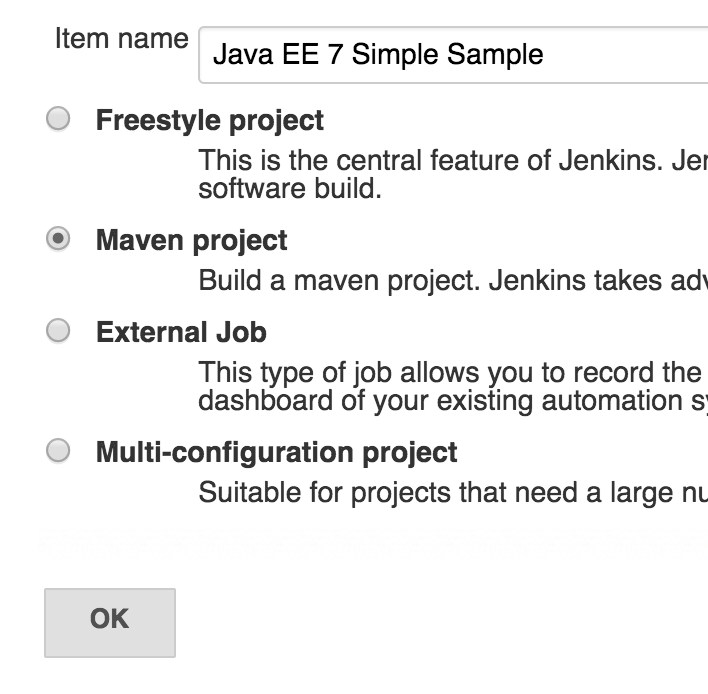

Click on “OK”.

Click on “OK”.